In Kubernetes, not all pods are equally important. Some are critical and must run continuously, while others can wait.

Essentially, pods have priorities.

When there’s a resource crunch in the cluster, higher-priority pods get resources first, pushing out (evicting) lower-priority ones if needed. Pod priority and the preemption policy together control this eviction.

Understanding how pod priorities work is essential for managing your workloads effectively, ensuring that the most important ones always have the necessary resources.

As we progress, we will learn how the preemption policy works, along with pod priority and priority classes.

Here is a quick summary for you.

Table of Contents

- Resource Repo

- Pod Priority

- Pod Priority Class

- preemptionPolicy

- system-node-critical and system-cluster-critical Priority Classes

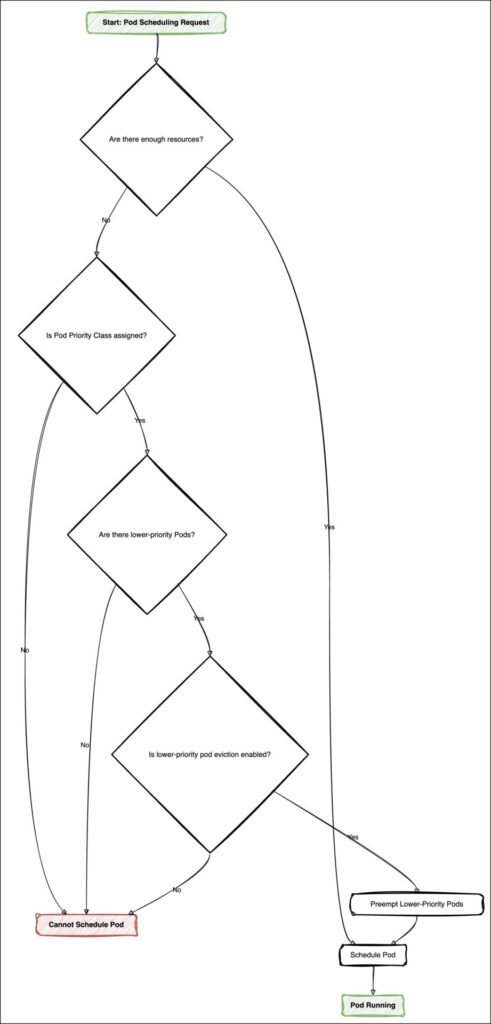

- A high-level overview of how pod scheduling priority works

- Conclusion

Resource Repo

To follow the hands-on exercises, clone this Git repo and change to the preemptionPolicy directory.

> git clone https://github.com/decisivedevops/blog-resources.git

> cd blog-resources/k8s/preemptionPolicy

Pod Priority

Pod priority is a numerical priority assigned to each pod. This priority value determines the importance of a pod relative to other pods.

When a pod is created, the Priority admission controller resolves the pod’s priority value from its priority class, and the scheduler uses that value to order pending pods. Higher-priority pods get placed first, and lower-priority ones are kept in the scheduling queue (this queuing only matters during a resource shortage within the cluster).

Let’s explore this practically.

- Apply the simple busybox deployment. Note we have not added any explicit priority configuration here yet.

> kubectl apply -f hp-deployment.yml

- Once the pod is launched, get the current priority for the pod.

> POD=$(kubectl get pod -l app=hp-busybox -o jsonpath="{.items[0].metadata.name}")

> kubectl get pod $POD -o yaml | grep priority

- So it’s 0. That’s the default value given to a non-system pod.

Pod Priority Class

So far, we have seen that the default priority value is 0. To set this integer value ourselves, we need a priority class.

A priority class is a non-namespaced (cluster-scoped) object, so you don’t specify a namespace when creating it. It maps a class name to a priority value, and pods pick up that value by referencing the class name.

Pod priority can be any 32-bit integer value smaller than or equal to 1 billion. This gives us the range from -2147483648 to 1000000000 (both values inclusive). Values above 1 billion are reserved for the built-in system priority classes.
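To make this concrete, here is a minimal sketch of what a PriorityClass manifest can look like. The name and value mirror the high-priority class used in this guide, but the description text is an assumption, and the actual hp-class.yml in the repo may differ.

```yaml
# Hypothetical sketch of a PriorityClass manifest; the real hp-class.yml may differ.
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: high-priority        # cluster-scoped, so there is no namespace field
value: 1000000               # user-defined classes must stay at or below 1000000000
globalDefault: false         # do not apply this class to pods without priorityClassName
description: "This priority class is for important pods only."  # wording is assumed
```

A pod opts in to this class by setting priorityClassName: high-priority in its spec.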

Let’s create a priority class and apply that to the previous deployment.

- Create the high-priority class.

> kubectl apply -f hp-class.yml

priorityclass.scheduling.k8s.io/high-priority created

Here is a quick rundown of hp-class.yml:

- **kind: PriorityClass**: Indicates the resource type as PriorityClass.

- **metadata: name: high-priority**: Sets the name of the PriorityClass to "high-priority".

- **value: 1000000**: Assigns a priority value of 1,000,000, making it a high priority.

- **globalDefault: false**: Indicates that this PriorityClass is not the default for all pods.

- **description**: Provides a description, stating this class is for important pods only.

- Update the hp-deployment.yml to include the created priority class (priorityClassName: high-priority).

    spec:
      priorityClassName: high-priority
      containers:
      - name: busybox
        image: busybox:1.36

- Reapply hp-deployment.yml.

> kubectl apply -f hp-deployment.yml

deployment.apps/busybox-deployment configured

- Get the new priority value.

> POD=$(kubectl get pod -l app=hp-busybox -o jsonpath="{.items[0].metadata.name}")

> kubectl get pod $POD -o yaml | grep priority

- Ok, so we got our high-priority pod. Let’s test how it works.

Here’s the plan to test how pod priority works.

- We have two deployments, hp-busybox-deployment and lp-busybox-deployment.

  - hp-busybox-deployment has the high-priority priority class assigned, with a value of 1000000.

  - lp-busybox-deployment has the default priority, i.e. 0.

- We will assign resource requests and limits to both deployments to create a resource crunch. (I will assign 4Gi for memory allocation, as I run this cluster on a small VM.)

- First, apply the lp-busybox-deployment. The pod should start.

- Next, apply the hp-busybox-deployment.

- Now, the cluster should have a memory shortage since both deployments require 4Gi memory.

- Observe what happens to both pods, hp-busybox and lp-busybox.
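For reference, the low-priority deployment in this plan could look roughly like the sketch below. This is an assumption about lp-deployment.yml, not a copy of it: the label, container name, and sleep command are hypothetical, and the memory figure should be adjusted so that two such pods cannot fit on your node at once.

```yaml
# Hypothetical sketch of lp-deployment.yml; names, labels, and command are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: lp-busybox-deployment
spec:
  replicas: 1
  selector:
    matchLabels:
      app: lp-busybox
  template:
    metadata:
      labels:
        app: lp-busybox
    spec:
      # No priorityClassName here, so the pod gets the default priority (0).
      containers:
      - name: lp-busybox
        image: busybox:1.36
        command: ["sleep", "3600"]   # keep the container alive for the test
        resources:
          requests:
            memory: "4Gi"            # size this so two such pods cannot both fit
          limits:
            memory: "4Gi"
```

The high-priority deployment would differ only in its names and labels and in the added priorityClassName: high-priority line.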

Action time.

# first, delete the hp-deployment.yml created in previous section, if running

> kubectl delete -f hp-deployment.yml

# apply lp-deployment.yml

> kubectl apply -f lp-deployment.yml

# get the pod status

> kubectl get pod

# apply the hp-deployment.yml

> kubectl apply -f hp-deployment.yml

# get the pod status

> kubectl get pod

NAME READY STATUS RESTARTS AGE

hp-busybox-deployment-7d57bbf456-5hfqt 1/1 Running 0 33s

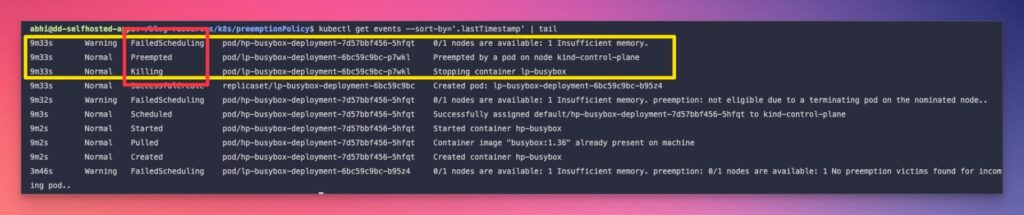

lp-busybox-deployment-6bc59c9bc-b95z4 0/1 Pending 0 33s- So

lp-busyboxpod got pending, and a newhp-busyboxis running. - Why? Here’s what happened.

– There was a resource shortage in the cluster since both pods required 4Gi memory.

– Priority admission controller checked the pod priority value assigned to both pods.

–hp-busyboxwon because it haspriorityClassName: high-prioritywith higher priority than default (0).

– So kubelet movedlp-busyboxin pending state and started a higher priority pod. - Moving to a pending state is essentially evicting the lower-priority pods. We can see this in the events.

> kubectl get events --sort-by='.lastTimestamp'

- This evicting behavior is controlled by preemptionPolicy.

preemptionPolicy

Until now, we have managed to evict (preempt) the lower priority pod when there is a resource shortage and a higher priority pod is launched.

Note that we have not explicitly defined this behavior in any configuration so far.

Before we set it explicitly, let’s quickly see what exactly preemptionPolicy is.

Essentially, preemptionPolicy controls whether pods using a priority class may preempt (evict) lower-priority pods when there is a resource shortage.

preemptionPolicy is set in the PriorityClass definition.

By default, preemptionPolicy is set to PreemptLowerPriority (the behavior observed above). The other allowed value, Never, still places the pod ahead of lower-priority pods in the scheduling queue, but it will not evict running pods to make room.

Let’s configure this explicitly and follow how pods are managed this time.

As usual, here’s the plan.

- Create two PriorityClass objects.

  - nonpreempting-priority-class will have preemptionPolicy configured as Never.

  - preempting-priority-class will have preemptionPolicy configured as PreemptLowerPriority.

- Test these two classes on the same test case we did previously using hp-busybox-deployment and lp-busybox-deployment, and observe the behavior of both pods.
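As a rough sketch, the two classes in this plan could be defined as below. Only the class names and preemptionPolicy values come from the plan above; the priority values and descriptions are assumptions, and the files in the repo may differ.

```yaml
# Hypothetical sketches of the two manifests; values and descriptions are assumptions.
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: nonpreempting-priority-class
value: 1000000
preemptionPolicy: Never                  # queues ahead of lower priorities, never evicts
globalDefault: false
description: "High priority, but will not preempt running pods."
---
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: preempting-priority-class
value: 1000000
preemptionPolicy: PreemptLowerPriority   # the default; may evict lower-priority pods
globalDefault: false
description: "High priority, and may preempt lower-priority pods."
```

Giving both classes the same value keeps preemptionPolicy as the only difference between the two test runs.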

# clean up

> kubectl delete -f hp-deployment.yml

> kubectl delete -f lp-deployment.yml

# create both PriorityClasses

> kubectl apply -f nonpreempting-priority-class.yml

priorityclass.scheduling.k8s.io/nonpreempting-priority-class created

> kubectl apply -f preempting-priority-class.yml

priorityclass.scheduling.k8s.io/preempting-priority-class created

# apply lp-deployment

> kubectl apply -f lp-deployment.yml

# get the pod status

> kubectl get pod

# update the hp-deployment.yml to include nonpreempting-priority-class

# spec:
#   priorityClassName: nonpreempting-priority-class
#   containers:
#   - name: hp-busybox
#     image: busybox:1.36

# apply the hp-deployment.yml

> kubectl apply -f hp-deployment.yml

# get the pod status

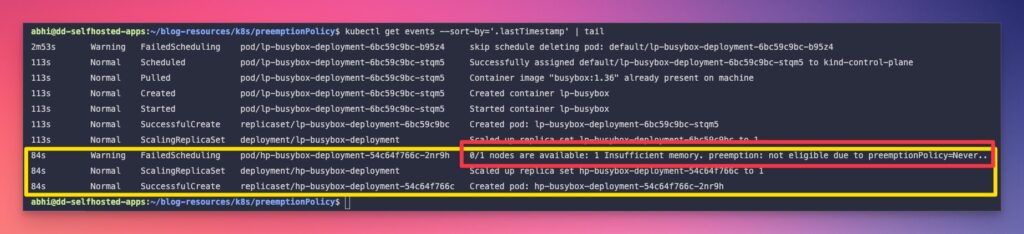

> kubectl get pod- Now

hp-busyboxis kept pending despite having a higher priority thanlp-busybox. - Why? Take a look at events.

> kubectl get events --sort-by='.lastTimestamp'

- You can set

priorityClassName: preempting-priority-classinhp-deployment.yml, reapply, and observe what happens to thelp-busyboxpod.

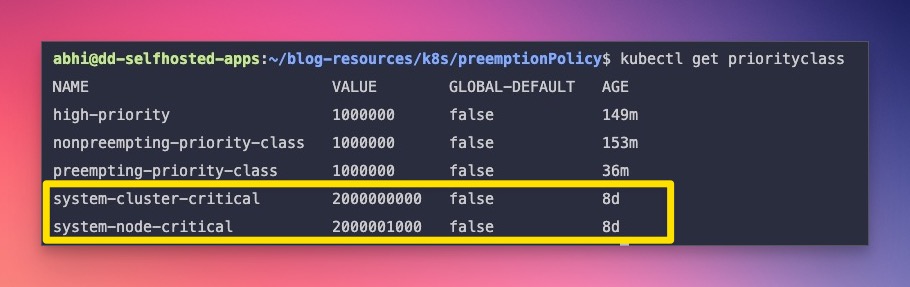

system-node-critical and system-cluster-critical Priority Classes

Two priority classes are created along with the cluster.

- system-node-critical

- system-cluster-critical

You can list these classes with the command below.

> kubectl get priorityclass

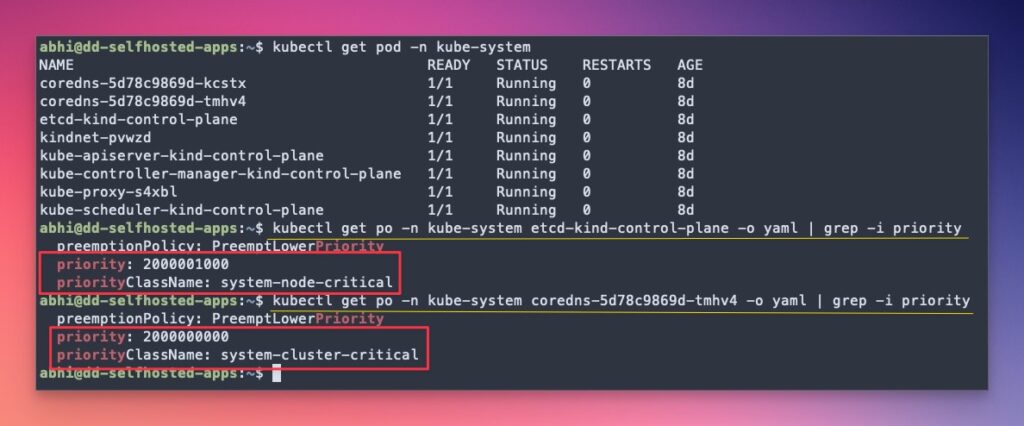

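Pods in the kube-system namespace use these classes. List the pods, then check the priority fields of a couple of them (the pod names below are from a kind cluster and will differ in yours).

> kubectl get pod -n kube-system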

> kubectl get po -n kube-system etcd-kind-control-plane -o yaml | grep -i priority

> kubectl get po -n kube-system coredns-5d78c9869d-tmhv4 -o yaml | grep -i priority
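For orientation, the two built-in classes look roughly like the trimmed sketch below. The values sit above the 1 billion limit for user-defined classes. The descriptions are reproduced as I recall them from recent Kubernetes releases, so treat your own cluster's output as authoritative. Typically, static control-plane pods such as etcd use system-node-critical, while add-ons such as CoreDNS use system-cluster-critical.

```yaml
# Trimmed view of the two built-in classes (server-generated metadata omitted).
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: system-node-critical
value: 2000001000            # highest built-in priority
description: "Used for system critical pods that must not be moved from their current node."
---
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: system-cluster-critical
value: 2000000000
description: "Used for system critical pods that must run in the cluster, but can be moved to another node if necessary."
```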

A high-level overview of how pod scheduling priority works

Conclusion

We have now explored pod priority, PriorityClass, and preemption hands-on. Using them in real-world clusters helps safeguard your critical applications from being evicted during a resource crunch.

Here’s a quick mind workout for you.

Explore the QoS concept in Kubernetes and figure out how Pod QoS relates to Pod Priority & Preemption. (I will be covering this in upcoming tutorials.)

🙏 Thanks for your time and attention all the way through!

Let me know your thoughts/ questions in the comments below.


Till we meet again, keep making waves.🌊 🚀